Develop school-wide/district-wide writing assessments

April 15, 2017

Many schools have already established a system for administering and scoring formal writing prompts. The scores from these common writing assessments are used to track student improvement and target future instruction.

For schools just beginning to develop a common writing assessment, here are some things to consider along the way. For schools looking to revise their current writing assessment procedures, here are some tips for making the process more efficient and effective.

Compare genres

When determining how many writing prompts to administer per year, also consider which writing genres (types of writing) you will assess. If you want to measure growth within the school year, you need to assess the same genre(s) throughout that year, so that scores from one point in the year can be compared with scores from a later point. However, if you administer four different prompts (e.g., narrative, persuasive, compare-contrast, and descriptive), it’s hard to measure growth, because rubric scores are hardly comparable when the writing genres are so drastically different. Consider an ABAB pattern. In other words, the four prompts could be personal narrative, compare-contrast, personal narrative, compare-contrast. Now you can track growth and improvement between the two narratives and between the two compare-contrast writings.
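For schools that record rubric scores in a spreadsheet or script, the within-genre comparison can be sketched in a few lines of Python. This is only an illustration: the scores, the single overall rubric score per prompt, and the function name `growth_by_genre` are all assumptions made for the example, not part of any particular scoring system.

```python
from collections import defaultdict

# Hypothetical rubric scores for one student across four prompts (ABAB pattern).
# Each entry is (prompt order, genre, rubric score); the values are invented.
prompts = [
    (1, "personal narrative", 3),
    (2, "compare-contrast", 2),
    (3, "personal narrative", 4),
    (4, "compare-contrast", 4),
]

def growth_by_genre(prompts):
    """Return the score change from the first to the last prompt of each genre."""
    by_genre = defaultdict(list)
    for order, genre, score in sorted(prompts):
        by_genre[genre].append(score)
    # Only genres assessed at least twice can show growth.
    return {g: s[-1] - s[0] for g, s in by_genre.items() if len(s) >= 2}

print(growth_by_genre(prompts))
```

With the ABAB data above, each genre yields one growth figure. With four different genres, the function returns an empty result, which mirrors the point that unlike genres give nothing to compare.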

Vary topics

Although the prompt genres should repeat, don’t use the exact same prompt topic the second time. If the first descriptive prompt asked students to describe their favorite season, then the next descriptive prompt could ask students to describe a favorite room in their houses. You can compare writing scores because both are the same type of writing (descriptive), but you haven’t asked students to write on the exact same topic. Reusing the same topic gets monotonous for the students and often produces a piece almost identical to the first one.

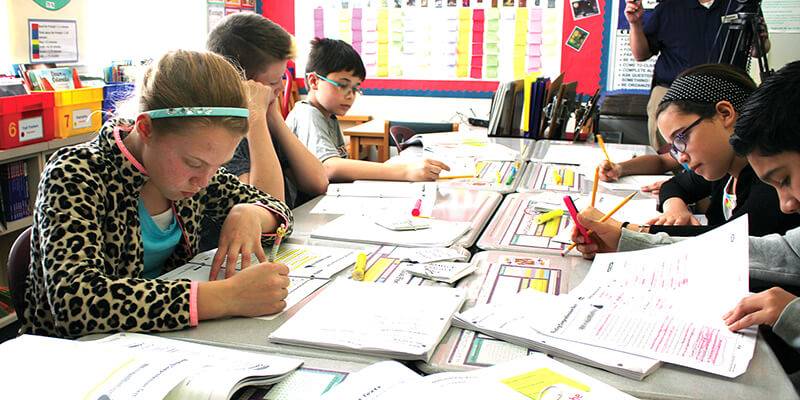

Establish procedures

The nature of a common writing assessment is that it is an on-demand, no-choice, one-sitting writing, just like most state assessments. Therefore, your in-house writing assessment should be first-draft only. Students should not have time to revise over days, edit with peers, conference with the teacher, etc. With peer support, teacher input, and multiple days, the writing is no longer indicative of what the student can do independently. For more accurate scoring data, these writing prompts should be first-draft, one-sitting writings.

Target instruction

Beyond all these suggestions, the most important facet of any school-wide/district-wide writing assessment is what the teacher does instructionally after the assessment. Which weaknesses appeared across the whole class or grade level and need to be addressed? Which 3-5 skills will the teacher target heavily before the next common writing assessment? This is the notion of assessment driving instruction. If the teacher does nothing more than administer the prompts, score the prompts, turn in the numbers to the office, and wait for the next prompt opportunity, then the instructional purpose of these assessments is lost. The goal is for the teacher to intentionally target specific weaknesses that were evident in the writings. These weaknesses should be the basis for upcoming mini-lessons. Then, after the next round of writing assessments, determine whether those weaknesses have improved, and identify new skills to troubleshoot.
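If rubric scores are recorded by trait, one rough way to spot class-wide weaknesses is to average each trait across students and list the lowest ones. The sketch below assumes a 1-4 rubric and uses invented student labels, trait names, and scores; the helper `weakest_traits` is hypothetical, and the numbers are a starting point for a teacher's judgment, not a replacement for reading the writings.

```python
from statistics import mean

# Hypothetical rubric-trait scores (1-4 scale) for a small class; all invented.
class_scores = {
    "Student A": {"ideas": 3, "organization": 2, "word choice": 3, "conventions": 2},
    "Student B": {"ideas": 4, "organization": 2, "word choice": 3, "conventions": 3},
    "Student C": {"ideas": 3, "organization": 1, "word choice": 2, "conventions": 2},
}

def weakest_traits(scores, n=3):
    """Return the n traits with the lowest class-wide average score."""
    traits = next(iter(scores.values())).keys()
    averages = {t: mean(s[t] for s in scores.values()) for t in traits}
    # Sort trait names from lowest to highest class average.
    return sorted(averages, key=averages.get)[:n]

print(weakest_traits(class_scores, n=2))
```

For this sample class, organization and conventions have the lowest averages, so they would be the first candidates for upcoming mini-lessons. Running the same calculation after the next assessment shows whether those averages moved.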